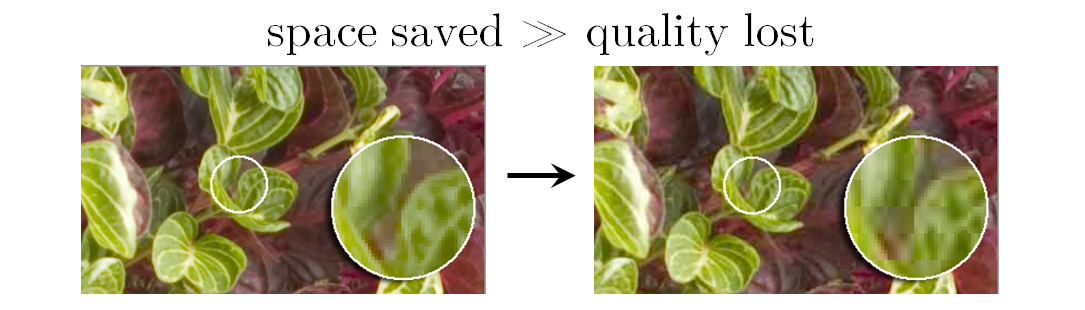

[1.2] Compressed sensing and single-pixel cameras

This'll be quick; the only part of the original blog post that I understood comfortably was the brief (and slightly inaccurate) explanation of traditional image compression. It's how image formats like JPEG can drastically reduce the storage space needed by an image file while losing only a bit of quality.

A camera takes a photo of dimensions 1024 × 2048 by recording each of the roughly 2 million pixels. That makes for a lot of data, but it turns out that most of it is redundant and can be thrown away if we can accept a small reduction in image quality. Many pictures have large areas with more or less the same color (e.g. blue sky, white table). The color may actually vary slightly, but not enough for our eyes to notice the difference. We can partition the array of 2 million pixels into a patchwork of rectangles of pixels, where each rectangle has roughly the same color. Instead of storing each pixel with its color, we only need to store each rectangle's average color, size and position!

Further discussion

Tao provides a more accurate and comprehensive picture of traditional image compression in his original blog post. He then goes into the main topic, compressed sensing, which aims to reconstruct the entire image (or signal, data recording, etc.) while capturing only a small part of the data in the first place. He motivates the need for this ruthless efficiency with sensor networks that run on small power supplies. Applications include magnetic resonance imaging ("MRI scans"), astronomy, and recovering corrupted data.

References

[1.2] Tao, Terence. Compressed sensing and single-pixel cameras. In Structure and Randomness: Pages from Year One of a Mathematical Blog. American Mathematical Society (2008).
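As a rough illustration of the patchwork-of-rectangles idea described in this post, here is a minimal Python sketch. It is a toy, not how JPEG actually works: it assumes a grayscale image stored as a list of lists, uses fixed square tiles rather than rectangles fitted to the picture, and the names `compress_blocks` and `decompress_blocks` are made up for this example.

```python
def compress_blocks(image, block):
    """Summarise each block-by-block tile as (row, col, height, width, mean)."""
    rows, cols = len(image), len(image[0])
    tiles = []
    for r in range(0, rows, block):
        for c in range(0, cols, block):
            h = min(block, rows - r)  # tiles on the edge may be smaller
            w = min(block, cols - c)
            total = sum(image[i][j]
                        for i in range(r, r + h)
                        for j in range(c, c + w))
            tiles.append((r, c, h, w, total / (h * w)))
    return tiles


def decompress_blocks(tiles, rows, cols):
    """Rebuild an approximate image by painting each tile with its mean."""
    image = [[0.0] * cols for _ in range(rows)]
    for r, c, h, w, mean in tiles:
        for i in range(r, r + h):
            for j in range(c, c + w):
                image[i][j] = mean
    return image


# Hypothetical 8 x 8 test picture: bright "sky" on top, dark "table" below,
# each with a small variation of at most one grey level.
image = [[(200 if r < 4 else 50) + ((r + c) % 3 - 1) for c in range(8)]
         for r in range(8)]

tiles = compress_blocks(image, 4)
restored = decompress_blocks(tiles, 8, 8)

pixels_stored = 8 * 8             # one number per pixel
numbers_stored = len(tiles) * 5   # five numbers per tile
max_error = max(abs(image[i][j] - restored[i][j])
                for i in range(8) for j in range(8))
print(pixels_stored, numbers_stored, max_error)
```

On this toy picture the tiles need far fewer numbers than the raw pixels, and every restored pixel stays within a couple of grey levels of the original, which is the trade-off the post describes.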
